We are in the midst of a voice tech tsunami, with never-before-seen capabilities in automatic speech recognition (ASR) and off-the-shelf speech analysis tools available to everyone.

Freely-available software packages for measuring common features from speech are commonplace, as are tools for developing learning algorithms based on artificial intelligence (AI). In fact, it’s become so easy to implement these tools thathigh school science fairs are replete with digital voice analysis entries.

So how are we doing leveraging this technology for clinical applications in neurological disease, where we know speech changes are part-and-parcel of neurodegeneration? Can we make use of widely-available voice tech?

Let’s say we are interested in developing a new speech-based marker that tracks with the progression of Parkinson’s disease. How do we go about doing it?

Given the success that data-driven solutions based on AI have had in other domains, it’s tempting to consider them as a solution here.

The AI story would go something like this: record speech samples from people with Parkinson’s disease as their disease progresses. At the same time, collect some gold-standard measures of disease progression, for example, ratings on the United Parkinson’s Disease Rating Scale (UPDRS). Next, use supervised AI techniques to train a model to predict UPDRS ratings from the speech sample, and voila—you now have a tool for tracking the progression of Parkinson’s disease from speech samples. Simple, right?

Not quite. While the purely data-driven solution described above sounds straightforward, there are real challenges associated with implementing and disseminating clinical-grade speech analytics. We highlight the principal reasons for this below.

Training data isn’t readily available.

To train a model to recognize how speech changes in Parkinson’s disease, you need two things: examples of speech from people with different stages of Parkinson’s disease, and clinical measurements of the speaker’s disease stage. The high-performing, robust AI models we use every day (e.g. speech recognition) are trained on internet-scale data sets that have been carefully annotated. For example, see Amazon’s paper on training a speech recognition model with 1 million hours (wow!) of speech.

Data at this scale is not available for clinical applications.

In fact, for some applications, particularly those focused on rarely occurring diseases, the patient population simply does not exist to generate data at this scale. If it exists, it is not available in a single repository, and there are complex challenges associated with combining across data sources. More importantly, even if the speech data exists, the clinical data required for training the models (e.g. UPDRS scores in our example) is very expensive and difficult to generate.

Existing representations of speech may not be clinically-relevant.

People aren’t robots. The characteristics of our speech change for any number of innocent reasons. We might enunciate very clearly when ordering a drive-through latte, and then turn to our friend in the passenger seat and mumble, “whajawant?”. Add to that other speech variations that are a function of age, gender, dialect, language, geographic region, speaking style, and a host of other variables that are difficult to control for and are not related to disease. AI models are not particularly good at parsing out these “nuisance” variables from the variables of interest. So, a trained AI model that performs well on the UPDRS prediction task described above could very well be capturing differences in the demographics of the training data rather than clinically meaningful differences. This is such an important topic that we will have a stand-alone blog post at some point in the future.

Existing AI models are difficult to interpret.

Many existing models are based on “black-box” algorithms that simply provide a prediction without the ability to ask questions about why the prediction was made or to understand the limits of their predictive abilities. Even if we stay away from using difficult-to-interpret models (e.g. deep learning) and focus on simple, easy-to-interpret models (e.g. decision trees), these models only provide information on how important each input feature is for the prediction task at hand. If the input features themselves are uninterpretable, then it really doesn’t matter what model you use. After all, what can we possibly glean from a model that tells us the 9th MFCC coefficient and its derivative are important for predicting the UPDRS? How is the 9th MFCC coefficient related to the neurobiology of speech and language production? What does it mean if a PD drug induces a change in the 9th MFCC coefficient? Why is this clinically relevant?

Challenges with data distribution shift.

Machine learning models are very sensitive to distribution shift – a change in the statistical properties of the data encountered when the algorithm is deployed compared to the data used to train the algorithm. A recent reproducibility study finds that even when researchers follow the experimental design protocol to a T, there is still a distribution shift induced and this negatively impacts model performance resulting in a higher rate of incorrect predictions. In other words, even if an AI model achieves excellent performance on identifying Parkinson’s on its training data, the odds are that performance will be lower on different patients, especially if they have different genders, ages, dialects – even background noise conditions.

Incorrect predictions made by AI models in most consumer applications have negligible effects. If a speech recognition engine misunderstands your speech, you either ignore it or try again. Applications in healthcare are different and require a far higher bar of accuracy and transparency. Decisions made by AI models in the clinical domain are mission critical as they may have a direct impact on the health and safety of patients.

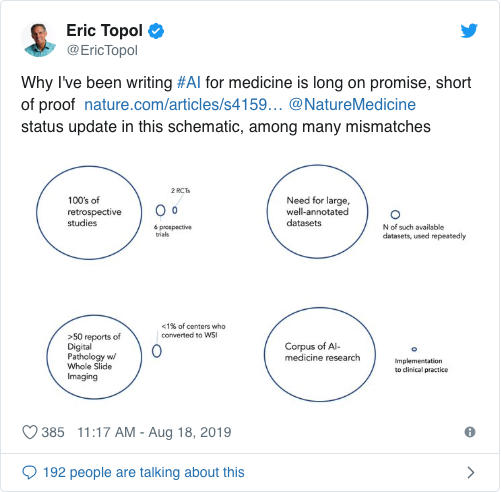

Dr. Eric Topol recently claimed on Twitter that AI for medicine is “long on promise, short of proof.” We agree, but we aim to change this. So how do we retain some of the virtues of AI while overcoming the challenges that limit its direct applicability in clinical speech applications?

One of the things that has always bothered us about a purely data-driven solution based on AI is that there is no natural way to integrate the scientific work that has already been done in this domain. Speech neuroscientists have been studying how various neurological diseases manifest themselves in speech for hundreds of years.

We overcome the challenges identified above by distilling the speech signal to clinically-relevant components through analytics that rely on our understanding of the human speech production mechanism and the neural basis that underlies it. A model-driven, transparent approach will bridge the gap between purely data-driven solutions and the manual speech assessment methods we discussed in the last post. Let’s dive into model-driven speech analytics next shall we?

Footnote: AI is an overloaded term whose definition has evolved and expanded over the years. We want to be a bit more specific about what we mean here. We are referring to a data-driven approach where high-dimensional speech features (or raw input samples) and clinical labels are used to train a model to predict the labels from the input speech. Some people call this machine learning, others call it supervised learning, others call it statistical learning. We use AI here because of how ubiquitous the term has become in the industry.